Extracting Event Time from Logs

Last Updated: 12:41 February 27th 2017

Latest Version: 17

I had a customer who wished to extract the raw event time from particular logs, because they use this raw event time for further analysis of events in a third-party system. The raw event time may differ greatly from the time at which the Log Decoder actually processes the log, especially if the logs are delayed in reaching the Log Decoder, perhaps because of network latency or file collection delays.

Currently they use the event.time field. However this has some limitations:

- If the timestamps are incomplete then this field is empty. For example many Unix systems generate a timestamp that does not contain the year.

- Even for the same device type, event source date formats can differ. For example, a US-based system may log the date in MM/DD/YY format, whereas a UK-based system may log the date in DD/MM/YY format. A date of 1/2/2017 could be interpreted as either the 1st February 2017 or the 2nd January 2017.

- The event.time field is actually a 64-bit TimeT field, which cannot be manipulated within the 32-bit Lua engine that currently ships with the product.
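The date-format ambiguity in the second point can be made concrete with a few lines of shell. This is a minimal sketch and not part of the method described in this post; the function name `to_iso_date` and its `mdy`/`dmy` argument are hypothetical names chosen for illustration.

```shell
#!/bin/bash
# Hypothetical helper: normalise a slash-separated date to YYYY-MM-DD.
# $1 = date such as 1/2/2017, $2 = field order used by the event source
# ("mdy" or "dmy"). The caller must supply the order, because the string
# alone cannot tell us which one was meant.
to_iso_date() {
    printf '%s\n' "$1" | awk -F/ -v order="$2" '
        order == "mdy" { printf "%04d-%02d-%02d\n", $3, $1, $2; next }
        order == "dmy" { printf "%04d-%02d-%02d\n", $3, $2, $1; next }
        { exit 1 }'
}

to_iso_date "1/2/2017" mdy   # US-style reading: 2017-01-02
to_iso_date "1/2/2017" dmy   # UK-style reading: 2017-02-01
```

The same input produces two different dates, which is why the field order has to come from knowledge of the event source rather than from the log line.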

All these issues are being addressed in future releases of the product, but the method outlined here gives something that can be used today.

Create some new meta keys for our Concentrators

We add the following to the index-concentrator-custom.xml files:

<key description="Epoch Time" level="IndexValues" name="epoch.time" format="UInt32" defaultAction="Open" valueMax="100000" />

<key description="Event Time String" level="IndexValues" name="eventtimestr" format="Text" valueMax="2500000"/>

<key description="UTCAdjust" level="IndexValues" name="UTCAdjust" format="Float32" valueMax="1000"/>

The meta key epoch.time will be used to store the raw event time in Unix epoch format, that is, seconds since 00:00:00 UTC on 1 January 1970.

The meta key eventtimestr will be used to store a timestamp that we create in the next step.

The meta key UTCAdjust will hold how many hours to add to or subtract from our timestamp.

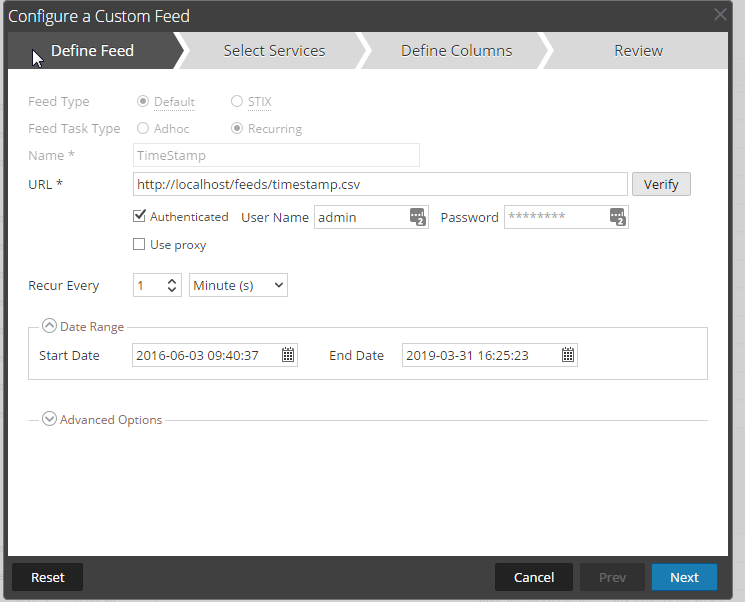

Create a Feed to tag events with a timestamp

Within the NetWitness Lua parser we are restricted in which functions we can use. As a result, the os.date functions are not available, so we need another method of getting a timestamp for our logs.

To do this, create a cron job on the SA Server that runs the following script every minute to populate a CSV file.

#!/bin/bash

# Script to write a timestamp in a feed file

devicetypes="rsasecurityanalytics rhlinux securityanalytics infobloxnios apache snort squid lotusdomino rsa_security_analytics_esa websense netwitnessspectrum bluecoatproxyav alcatelomniswitch vssmonitoring voyence symantecintruder sophos radwaredp ironmail checkpointfw1 websense rhlinux damballa snort cacheflowelff winevent_nic websense82 fortinet unknown"

for i in {1..60}

do

mydate=$(date -u)

echo "#Device Type, Timestamp" >/var/netwitness/srv/www/feeds/timestamp.csv

for j in $devicetypes

do

echo "$j",$mydate >>/var/netwitness/srv/www/feeds/timestamp.csv

done

sleep 1

done
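One thing to be aware of is that the script above empties timestamp.csv and refills it on every pass, so a feed refresh could in principle read a half-written file. The following is a minimal sketch of one way to avoid that, by building the file under a temporary name and renaming it into place. The function name `write_timestamp_feed` and its argument layout are hypothetical; the header line and the path in the example call are taken from the script above.

```shell
#!/bin/bash
# Hypothetical helper: write the timestamp feed to a temporary file and then
# rename it over the target, so readers never see a partially written CSV.
# $1 = target CSV path, remaining arguments = device types.
write_timestamp_feed() {
    local target="$1" tmp mydate devicetype
    shift
    tmp=$(mktemp "${target}.XXXXXX") || return 1
    # The inner command substitution is unquoted on purpose, matching the
    # original script: word splitting collapses any padding that date puts
    # in front of single-digit days.
    mydate=$(echo $(date -u))
    {
        echo "#Device Type, Timestamp"
        for devicetype in "$@"; do
            echo "$devicetype,$mydate"
        done
    } > "$tmp"
    chmod 644 "$tmp"      # mktemp creates the file readable by the owner only
    mv "$tmp" "$target"   # a rename within one filesystem is atomic
}

# Example call, using the path from the script above:
# write_timestamp_feed /var/netwitness/srv/www/feeds/timestamp.csv rhlinux snort websense
```

The temporary file is created next to the target so that the final `mv` stays on the same filesystem.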

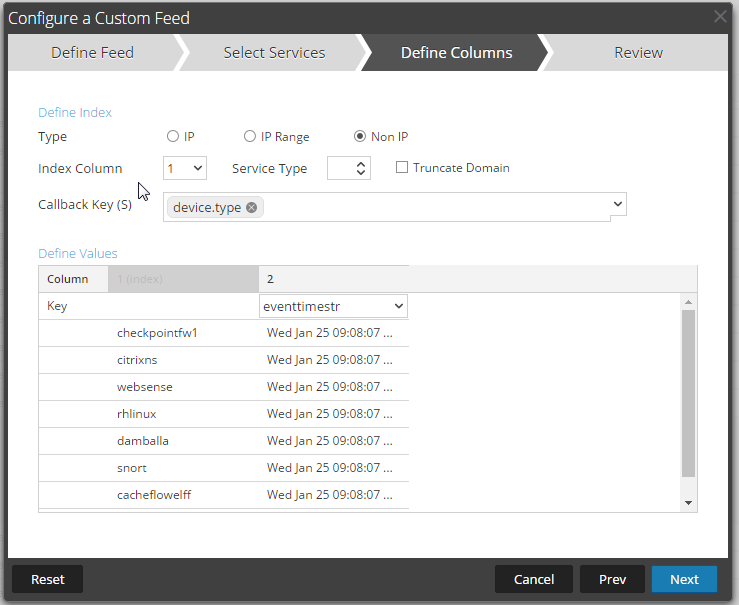

This will generate a CSV file that we can use as a feed, with the following format:

checkpointfw1,Wed Jan 25 09:17:49 UTC 2017

citrixns,Wed Jan 25 09:17:49 UTC 2017

websense,Wed Jan 25 09:17:49 UTC 2017

rhlinux,Wed Jan 25 09:17:49 UTC 2017

damballa,Wed Jan 25 09:17:49 UTC 2017

snort,Wed Jan 25 09:17:49 UTC 2017

cacheflowelff,Wed Jan 25 09:17:49 UTC 2017

winevent_nic,Wed Jan 25 09:17:49 UTC 2017

Here the first column of the CSV is our device.type and the part after the comma is our UTC timestamp.

We use this as a feed which we push to our Log decoders.

The CSV file is created every minute, and we also refresh the feed every minute. This means that this timestamp could be up to 2 minutes out of date compared with our logs.

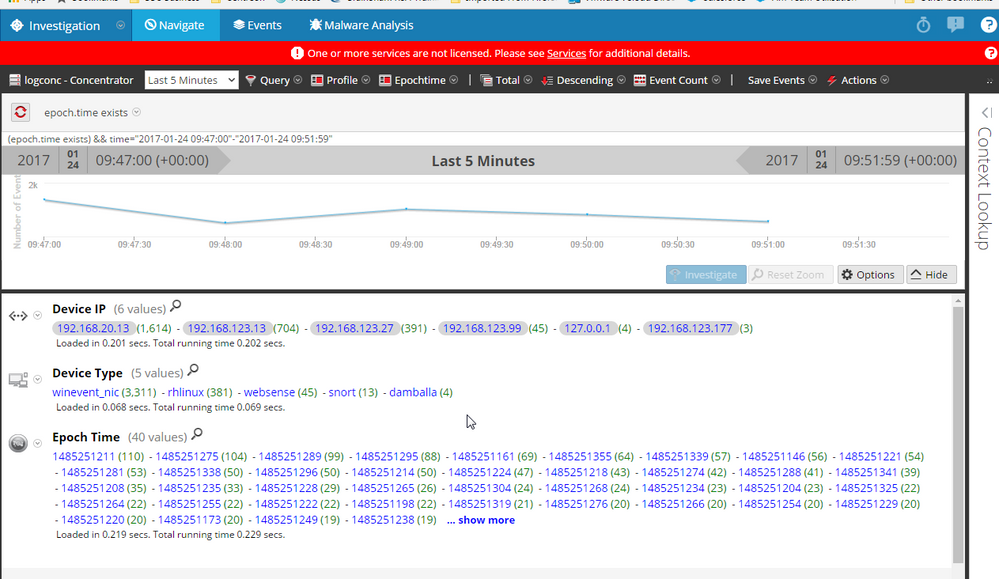

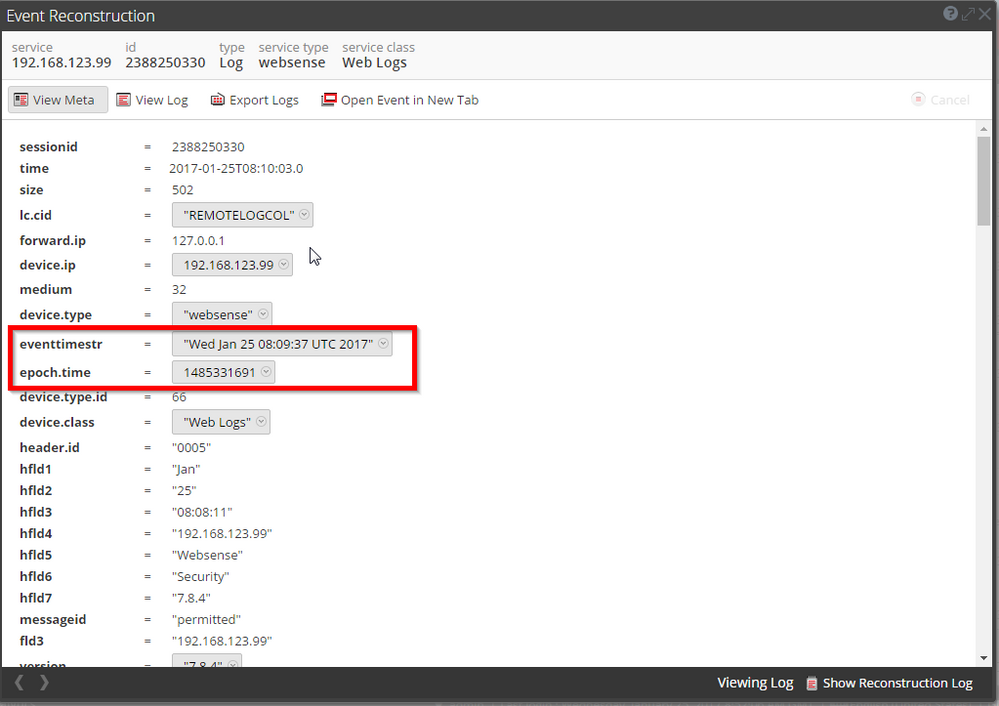

Here is an example of the timestamp visible in our websense logs:

eventtimestr holds our dummy timestamp

epoch.time holds the actual epoch time at which the raw log was generated.

Create an App Rule to tag sessions without a UTC offset with an alert

Create an App Rule on your Log Decoders that will generate an alert if no UTCAdjust meta key exists. This saves you from having to define UTC offsets of 0 for devices that are already logging in UTC.

It is important that the following are entered for the rule:

Rule Name: UTC Offset Not Specified

Condition: UTCAdjust !exists

Alert on: Alert

The Alert box is ticked.

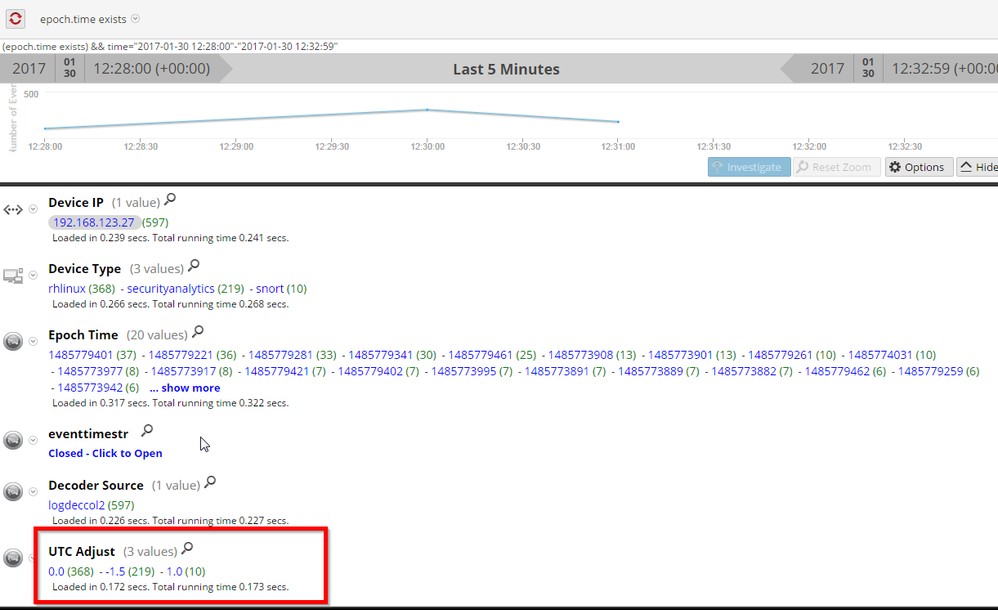

Create a feed to specify how to adjust the calculated time per device IP and device type

Create a CSV file with the following columns:

#DeviceIP,DeviceType,UTC Offset

192.168.123.27,rhlinux,0

192.168.123.27,snort,1.0

192.168.123.27,securityanalytics,-1.5
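Because this CSV is maintained by hand, it can be worth checking it before building the feed. The following is a minimal sketch, not part of the original method; the function name `check_utcadjust_csv` is hypothetical, and the checks (three fields, something that looks like an IPv4 address, a numeric offset) are assumptions based on the example rows above.

```shell
#!/bin/bash
# Hypothetical helper: report lines of a UTCAdjust-style CSV that do not look
# like "IPv4 address,device type,numeric hour offset".
# Comment lines starting with "#" and blank lines are skipped.
# $1 = CSV path. Returns non-zero if any bad line is found.
check_utcadjust_csv() {
    awk -F, '
        function bad(msg) { printf "line %d: %s: %s\n", NR, msg, $0; errors++ }
        /^#/ || /^[ \t]*$/                        { next }
        NF != 3                                   { bad("expected 3 fields"); next }
        $1 !~ /^[0-9]+\.[0-9]+\.[0-9]+\.[0-9]+$/  { bad("does not look like an IPv4 address"); next }
        $2 == ""                                  { bad("empty device type"); next }
        $3 !~ /^-?[0-9]+(\.[0-9]+)?$/             { bad("offset is not a number"); next }
        END { exit (errors > 0) }
    ' "$1"
}

# Example call:
# check_utcadjust_csv /root/feeds/UTCAdjust.csv && echo "CSV looks fine"
```

The address check only tests the dotted shape, not the range of each octet.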

Copy the attached UTCAdjust.xml. This is the feed definition file for a Multi-indexed feed that uses the device.ip and device.type. We are unable to do this through the GUI.

<?xml version="1.0" encoding="UTF-8" standalone="yes"?>

<FDF xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:noNamespaceSchemaLocation="feed-definitions.xsd">

<FlatFileFeed comment="#" separator="," path="UTCAdjust.csv" name="UTCAdjust">

<MetaCallback name="Device IP" valuetype="IPv4">

<Meta name="device.ip"/>

</MetaCallback>

<MetaCallback name="DeviceType" valuetype="Text" ignorecase="true">

<Meta name="device.type"/>

</MetaCallback>

<LanguageKeys>

<LanguageKey valuetype="Float32" name="UTCAdjust"/>

</LanguageKeys>

<Fields>

<Field type="index" index="1" key="Device IP"/>

<Field type="index" index="2" key="DeviceType"/>

<Field key="UTCAdjust" type="value" index="3"/>

</Fields>

</FlatFileFeed>

</FDF>

Run the UTCAdjustFeed.sh script to generate the feed. This generates the actual feed and then copies it to the correct directories on any log and packet decoders. (There really isn't any reason to copy it to a packet decoder). This script will download the feed from a CSV file hosted on a webserver. This script could be scheduled as a cronjob depending on how often it needs to be updated.

wget http://localhost/feeds/UTCAdjust.csv -O /root/feeds/UTCAdjust.csv --no-check-certificate

find /root/feeds | grep xml >/tmp/feeds

for feed in $(cat /tmp/feeds)

do

FEEDDIR=$(dirname $feed)

FEEDNAME=$(basename $feed)

echo $FEEDDIR

echo $FEEDNAME

cd $FEEDDIR

NwConsole -c "feed create $FEEDNAME" -c "exit"

done

scp *.feed root@192.168.123.3:/etc/netwitness/ng/feeds

scp *.feed root@192.168.123.2:/etc/netwitness/ng/feeds

scp *.feed root@192.168.123.44:/etc/netwitness/ng/feeds

NwConsole -c "login 192.168.123.2:50004 admin netwitness" -c "/decoder/parsers feed op=notify" -c "exit"

NwConsole -c "login 192.168.123.3:50002 admin netwitness" -c "/decoder/parsers feed op=notify" -c "exit"

NwConsole -c "login 192.168.123.44:50002 admin netwitness" -c "/decoder/parsers feed op=notify" -c "exit"
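The copy and notify lines above are repeated once per decoder. As a rough sketch of how that could be driven from a list instead, the following function prints the commands for each decoder so they can be reviewed before being run. The function name `print_feed_push_commands` is hypothetical; the commands, addresses, and credentials it prints are the ones already used in the script above, and only the loop is new.

```shell
#!/bin/bash
# Hypothetical helper: print (not run) the copy and notify commands for each
# decoder. Each argument is a host:port pair as used in the NwConsole login.
print_feed_push_commands() {
    local target host
    for target in "$@"; do
        host=${target%%:*}   # strip the :port suffix for scp
        echo "scp *.feed root@${host}:/etc/netwitness/ng/feeds"
        echo "NwConsole -c \"login ${target} admin netwitness\" -c \"/decoder/parsers feed op=notify\" -c \"exit\""
    done
}

print_feed_push_commands 192.168.123.2:50004 192.168.123.3:50002 192.168.123.44:50002
```

Printing rather than executing keeps the sketch safe to try; once the output has been checked, it can be piped to a shell.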

Use a Lua parser to extract the event time and then calculate epoch time

We then use a Lua parser to extract the timestamp from the raw log and calculate the epoch time. Our approach is as follows:

- Define the device types that we are interested in

- Create a regular expression to extract the timestamps for the logs we are interested in

- Add any missing information to this timestamp in order to calculate the epoch time. For example, for timestamps without a year, I assume the current year and then check that this does not create a date that is too far into the future. If the date is too far in the future, then I use the previous year. This should account for logs around the 31st December / 1st January boundary.

- Finally adjust the calculated time depending on the UTC Offset feed.
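The year-inference and offset steps above can be sketched in shell to show the logic. This is an illustration only: it relies on GNU date, which is not available inside the Lua parser, so the attached parser has to do the arithmetic itself. The function names `infer_epoch` and `apply_utc_adjust`, the one-day tolerance for "too far in the future", and the choice to add the UTCAdjust value to the calculated time are all assumptions made for this example.

```shell
#!/bin/bash
# Illustration only: uses GNU date, which the Lua parser cannot call.
# Hypothetical helper: turn a year-less timestamp such as "Jan 24 10:23:36"
# into epoch seconds, treating the timestamp as UTC.
# $1 = timestamp without a year
# $2 = reference "now" in epoch seconds
# $3 = how many seconds into the future a result may lie before we fall
#      back to the previous year (default one day, an assumed tolerance)
infer_epoch() {
    local ts="$1" now="$2" max_future="${3:-86400}"
    local year epoch
    year=$(date -u -d "@$now" +%Y) || return 1
    epoch=$(date -u -d "$ts $year" +%s) || return 1
    if [ "$epoch" -gt $((now + max_future)) ]; then
        # Assuming the current year put the event too far ahead of now,
        # so the log was most likely written in the previous year.
        epoch=$(date -u -d "$ts $((year - 1))" +%s) || return 1
    fi
    echo "$epoch"
}

# Hypothetical helper: apply a UTCAdjust value in hours (may be fractional).
# Assumes the offset is ADDED to the calculated time.
apply_utc_adjust() {
    awk -v e="$1" -v h="$2" 'BEGIN { printf "%.0f\n", e + h * 3600 }'
}

# A log stamped "Dec 31 23:59:50" that is processed ten seconds into 2017
# resolves to the previous year:
infer_epoch "Dec 31 23:59:50" 1483228810   # 1483228790
apply_utc_adjust 1485253416 -1.5           # 1485248016
```

Passing the reference time in as an argument mirrors the approach in this post, where "now" comes from the timestamp feed rather than from a clock call inside the parser.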

I've attached and copied the code below. This should be placed in /etc/netwitness/ng/parsers on your log decoders.

Currently this Lua parser supports:

- windows event logs

- damballa logs

- snort logs

- rhlinux

- websense

The parser could be expanded further to account for different timestamp formats for particular device.ip values. You could create another feed to tag the locale of your devices and then use this within the Lua parser to make decisions about how to process dates.

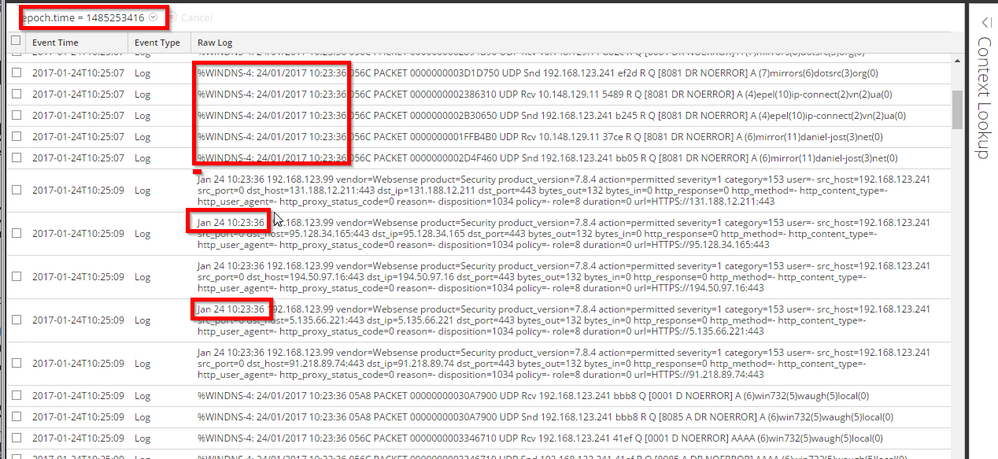

Here is the finished result:

I can investigate on epoch.time.

For example, an epoch converter shows that 1485253416 is:

GMT: Tue, 24 Jan 2017 10:23:36 GMT

and all the logs have an event time in them of 24th January 10:23:36.
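The same conversion can be checked from a shell instead of a web-based converter. This is a small sketch that assumes GNU date; the function name `epoch_to_gmt` is a hypothetical name for this example.

```shell
#!/bin/bash
# Convert epoch seconds to a readable UTC string with GNU date.
# LC_ALL=C keeps the day and month names in English.
epoch_to_gmt() {
    LC_ALL=C date -u -d "@$1" '+%a, %d %b %Y %H:%M:%S GMT'
}

epoch_to_gmt 1485253416   # Tue, 24 Jan 2017 10:23:36 GMT
```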
